Introduction

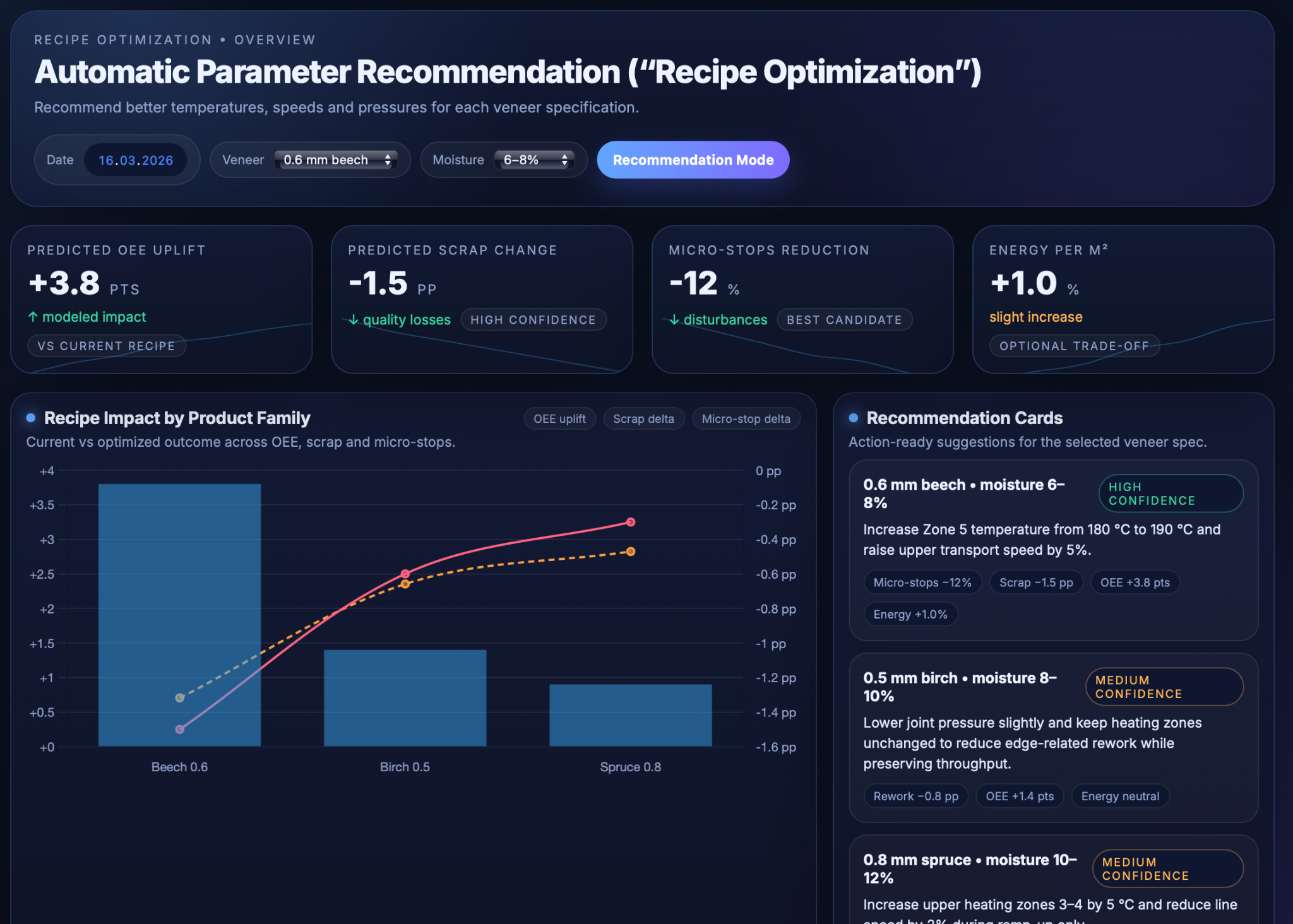

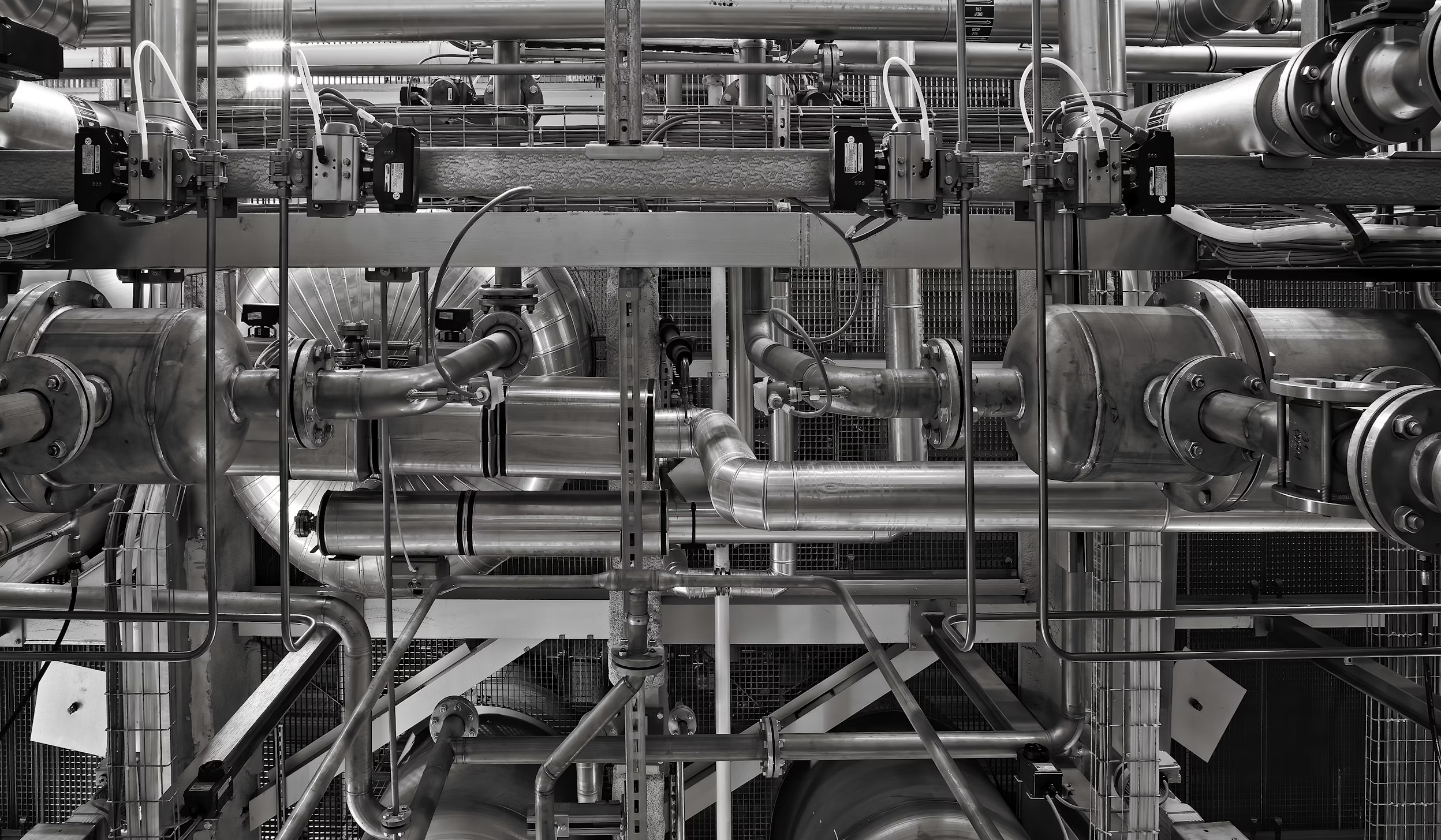

The purpose of this use case is to automatically recommend better process “recipes” for the production line so that temperatures, speeds, pressures, and other settings are tuned for each veneer or product type. Instead of relying only on fixed standard recipes and slow trial‑and‑error, the system learns from everyday production data which combinations of parameters deliver good results and which ones cause scrap, micro‑stops, or unstable quality. Over time, it becomes a decision‑support tool that suggests targeted adjustments rather than broad, risky experiments.

“Recipe optimization” is needed because modern lines run many veneer species, thicknesses, and moisture ranges, each of which can react differently to the same temperature or speed. A recipe that works well for one product can cause micro‑stops, higher scrap, or unnecessary energy use for another. Manually exploring all possible parameter combinations is unrealistic, especially when production schedules are tight. By treating each run as a structured experiment and using data‑driven models, the solution can search for better settings in a systematic and efficient way while respecting safety and process limits.

The key objectives are threefold: to treat routine production as a continuous learning loop, to use Bayesian optimization and regression models to find improved parameter sets with minimal extra trials, and to translate the results into simple, operator‑friendly suggestions such as “increase Zone 5 to 190 °C and raise upper transport speed by 5%.” Each group benefits differently. Operators see clear recommendations and their expected impact; technologists and process engineers get deeper insight, guardrails, and control over which recipes become the new standard; and continuous‑improvement and management teams gain a transparent link between recipe changes and shifts in OEE, scrap, and energy.

Data Used

The recipe optimization uses a rich set of production and quality data so that each run becomes a learnable experiment rather than just a result. By combining raw sensor signals, derived speed metrics, recipe flags, and outcome KPIs, the system can link “how we ran” to “what we got” for every veneer specification.

Analog signals: temperatures, pressures, thickness profiles

Key continuous signals are collected along the production line and aggregated per run and veneer type. They include thickness sensor readings at the former clipper, which are turned into thickness profiles for each veneer/product type and run, and the setpoint and actual temperatures of all upper and lower heating zones. Glue and joint pressure signals (for example, glue heating pressure and joint press pressure) are also captured, giving a detailed picture of the thermal and mechanical conditions that each veneer sheet experiences.

Derived speeds, timings and cycle‑time distributions

Beyond raw sensor values, the system derives effective speeds and timings from the chain of sensors that runs from infeed through cutting and transport to stacking. Time stamps between key sensors are converted into segment speeds and cycle times per veneer segment, order, and product. Instead of storing only a single average, the solution keeps distributions (means, percentiles, standard deviations) of cycle times and speeds within each run, which makes it possible to distinguish truly stable operation from noisy or highly variable conditions.
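A minimal sketch of this derivation in Python, assuming each veneer segment arrives as a dictionary of sensor time stamps in seconds and that the distances between consecutive sensors are known. The sensor names (`infeed`, `cutter`, `transport_end`, `stacker`) and the distances in `SENSOR_DISTANCES_M` are hypothetical placeholders, not the line's actual configuration.

```python
import statistics

# Hypothetical sensor chain and distances (metres) between consecutive sensors.
SENSOR_DISTANCES_M = {
    ("infeed", "cutter"): 2.0,
    ("cutter", "transport_end"): 4.0,
    ("transport_end", "stacker"): 1.5,
}


def segment_speeds(timestamps: dict[str, float]) -> dict[tuple[str, str], float]:
    """Convert one veneer segment's sensor time stamps (s) into segment speeds (m/s)."""
    speeds = {}
    for (upstream, downstream), distance in SENSOR_DISTANCES_M.items():
        dt = timestamps[downstream] - timestamps[upstream]
        if dt > 0:  # skip out-of-order or duplicated triggers
            speeds[(upstream, downstream)] = distance / dt
    return speeds


def cycle_time(timestamps: dict[str, float]) -> float:
    """Total time from infeed to stacking for one segment (s)."""
    return timestamps["stacker"] - timestamps["infeed"]


def distribution(values: list[float]) -> dict[str, float]:
    """Summarise a run's values; needs at least two values for the percentiles."""
    deciles = statistics.quantiles(values, n=10, method="inclusive")
    return {
        "mean": statistics.fmean(values),
        "std": statistics.stdev(values),
        "p10": deciles[0],
        "p50": deciles[4],
        "p90": deciles[8],
    }


# Two segments of one run (invented time stamps).
run = [
    {"infeed": 0.0, "cutter": 1.0, "transport_end": 3.0, "stacker": 4.0},
    {"infeed": 10.0, "cutter": 11.25, "transport_end": 13.25, "stacker": 14.5},
]
stats = distribution([cycle_time(t) for t in run])  # mean 4.25 s, p10 4.05 s, p90 4.45 s
```

Keeping percentiles alongside the mean is what lets a later model tell a run with steady 4.25 s cycles apart from one that averages 4.25 s but swings widely.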

Binary / discrete settings that define the recipe

Binary and discrete settings capture the machine modes that are essential parts of the recipe but are not visible in analog trends. Typical examples are creep versus normal speed selections, head‑lift positions, the state of alignment pads (extended or retracted), and other on/off or enumerated flags that influence how veneer is transported and joined. Together with numeric parameters, these mode flags form the full “recipe vector” that feeds the optimization and regression models.
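As an illustration, the recipe vector could be assembled as below. The field names, the boolean mode flags, and the `head_lift` levels are hypothetical. One-hot encoding for enumerated modes is one common choice that avoids implying an order between modes; it is not necessarily the encoding used in this system.

```python
# Hypothetical recipe fields; real tag names depend on the line's control system.
NUMERIC = ["zone5_temp_c", "upper_transport_pct", "joint_press_bar"]
BINARY = ["creep_speed", "alignment_pads_extended"]
ENUMS = {"head_lift": ["low", "mid", "high"]}


def recipe_vector(recipe: dict) -> list[float]:
    """Flatten numeric parameters and mode flags into one feature vector."""
    vec = [float(recipe[name]) for name in NUMERIC]
    vec += [1.0 if recipe[name] else 0.0 for name in BINARY]
    for name, levels in ENUMS.items():
        if recipe[name] not in levels:
            raise ValueError(f"unknown {name} level: {recipe[name]!r}")
        # One-hot encoding: one column per level, exactly one of them set.
        vec += [1.0 if recipe[name] == level else 0.0 for level in levels]
    return vec


example = {
    "zone5_temp_c": 190, "upper_transport_pct": 105, "joint_press_bar": 6.5,
    "creep_speed": False, "alignment_pads_extended": True, "head_lift": "mid",
}
print(recipe_vector(example))  # [190.0, 105.0, 6.5, 0.0, 1.0, 0.0, 1.0, 0.0]
```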

Outcomes and context: OEE, scrap, micro‑stops, complaints, veneer spec

For each run, the platform records a performance and quality outcome vector along with contextual information. Outcomes include OEE broken down into availability, performance, and quality components; scrap and rework percentages; micro‑stop frequency and duration; and relevant customer‑complaint tags where available. Context features such as veneer species, nominal thickness, moisture range, product type, press parameters, shift, and order are added so that models can learn veneer‑ and product‑specific behavior instead of treating all production as homogeneous. Each run therefore becomes a structured data point: “recipe + context → performance and quality results.”
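For reference, the commonly used OEE decomposition is shown below; the exact definitions of planned time, ideal cycle time, and good count applied on this line are assumptions here. Micro-stops mainly lower the performance factor P, because they reduce output during time that still counts as run time.

```latex
\mathrm{OEE} = A \cdot P \cdot Q, \qquad
A = \frac{\text{run time}}{\text{planned production time}}, \quad
P = \frac{\text{ideal cycle time} \times \text{total count}}{\text{run time}}, \quad
Q = \frac{\text{good count}}{\text{total count}}
```

For instance, with hypothetical values A = 0.90, P = 0.85, and Q = 0.97, OEE = 0.90 × 0.85 × 0.97 ≈ 0.742, or about 74%.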

Technical Realisation

The recipe optimization engine turns everyday production into a continuous experimentation loop instead of relying on occasional test campaigns. Models are updated as new runs are completed, so recommendations gradually become more accurate and more specific to each veneer specification and product.

Treating production runs as continuous experiments

Each production run is defined as a time window with a stable veneer specification and relatively stable recipe settings (for example, one order or a period with unchanged parameters). For that window, the system aggregates parameter statistics (means, ranges, standard deviations) together with outcome metrics such as OEE components, scrap, and micro‑stops. Variability within the run is kept as additional features, so the models can distinguish stable “good” operation from recipes that only work under very narrow conditions.
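A rough sketch of the run construction, assuming time-ordered samples tagged with a veneer specification and a recipe identifier; `veneer_spec`, `recipe_id`, `sheets`, and `scrap_sheets` are hypothetical field names, and the sample values are invented. Consecutive samples with the same spec and recipe form one run, and each run is reduced to parameter statistics plus a scrap outcome.

```python
import statistics
from itertools import groupby


def split_runs(samples: list[dict], keys=("veneer_spec", "recipe_id")) -> list[list[dict]]:
    """Group consecutive, time-ordered samples while spec and recipe stay unchanged."""
    return [list(group) for _, group in groupby(samples, key=lambda s: tuple(s[k] for k in keys))]


def aggregate_run(run: list[dict], params: list[str]) -> dict:
    """Reduce one run to mean/range/std per parameter plus scrap percentage."""
    features = {"veneer_spec": run[0]["veneer_spec"], "n_samples": len(run)}
    for p in params:
        values = [s[p] for s in run]
        features[f"{p}_mean"] = statistics.fmean(values)
        features[f"{p}_range"] = max(values) - min(values)
        features[f"{p}_std"] = statistics.pstdev(values)  # 0.0 for single-sample runs
    sheets = sum(s["sheets"] for s in run)
    scrap = sum(s["scrap_sheets"] for s in run)
    features["scrap_pct"] = 100.0 * scrap / sheets if sheets else 0.0
    return features


samples = [
    {"veneer_spec": "birch-1.5", "recipe_id": "R1", "zone5_temp_c": 180, "sheets": 10, "scrap_sheets": 1},
    {"veneer_spec": "birch-1.5", "recipe_id": "R1", "zone5_temp_c": 182, "sheets": 10, "scrap_sheets": 0},
    {"veneer_spec": "birch-1.5", "recipe_id": "R2", "zone5_temp_c": 190, "sheets": 20, "scrap_sheets": 1},
]
runs = [aggregate_run(r, ["zone5_temp_c"]) for r in split_runs(samples)]
```

`itertools.groupby` only merges adjacent samples, so a recipe that is used, changed, and later restored produces two separate runs, which matches the "time window" definition above.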

Supervised regression models are then trained on this run‑level dataset to learn how combinations of temperatures, speeds, pressures, binary modes, and veneer attributes map to performance and quality. These models act as surrogates for the real production line, allowing the system to explore “what‑if” adjustments in silico before suggesting them to operators. In this way, every completed run feeds back into the models, closing the loop between operation, learning, and improved future recipes.
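To make the surrogate idea concrete, the sketch below fits a linear ridge regression using only the standard library and then evaluates a what-if change. It is a deliberately simple stand-in: the source does not say which regression family is used, and in practice features would be standardised and richer models may fit better.

```python
def solve(A: list[list[float]], b: list[float]) -> list[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def fit_ridge(X: list[list[float]], y: list[float], lam: float = 1e-6) -> list[float]:
    """Fit y ≈ w0 + w·x via the ridge normal equations (XᵀX + λI) w = Xᵀy."""
    rows = [[1.0] + list(x) for x in X]  # prepend an intercept column
    d = len(rows[0])
    A = [[sum(r[i] * r[j] for r in rows) + (lam if i == j and i > 0 else 0.0)
          for j in range(d)] for i in range(d)]  # intercept is not penalised
    b = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(d)]
    return solve(A, b)


def predict(w: list[float], x: list[float]) -> float:
    return w[0] + sum(wi * xi for wi, xi in zip(w[1:], x))


# Toy data generated from y = 2 + 3*x1 - x2, so the fit should recover those weights.
X = [[0, 0], [1, 0], [0, 1], [1, 1], [2, 1]]
y = [2, 5, 1, 4, 7]
w = fit_ridge(X, y)
what_if = predict(w, [1.5, 0])  # ≈ 6.5
```

In the recipe setting, each row of `X` would be a run's recipe-plus-context features and `y` a KPI such as scrap percentage; `predict` then answers "what if Zone 5 were 10 °C higher" without touching the line.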

Bayesian optimization of recipes with constraints and uncertainty

On top of the surrogate models, Bayesian optimization is used to search for better parameter combinations while explicitly handling uncertainty and process limits. A Gaussian Process or similar Bayesian regression approximates the relationship between recipe parameters, veneer context, and target KPIs, and provides not only a predicted outcome but also an uncertainty band for each candidate recipe. The uncertainty band separates well‑supported predictions in data‑dense regions from speculative ones in poorly explored regions, so the optimization can move cautiously where evidence is thin and act more decisively where predictions are reliable.
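A compact sketch of the Gaussian Process part, assuming a squared-exponential (RBF) kernel with fixed hyperparameters and plain Python lists; a production system would typically fit the length scale and noise level to data and use a numerical library. It returns the posterior mean and standard deviation, i.e. the predicted outcome and its uncertainty band, at one candidate recipe.

```python
import math


def rbf(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between two feature vectors."""
    sq = sum((x - y) ** 2 for x, y in zip(a, b))
    return variance * math.exp(-0.5 * sq / length_scale ** 2)


def cholesky(K):
    """Lower-triangular L with K = L Lᵀ (K must be positive definite)."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    """Forward substitution for L z = b."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z


def solve_upper_t(L, z):
    """Back substitution for Lᵀ x = z."""
    n = len(z)
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_posterior(X, y, x_new, noise=1e-2, **kernel):
    """Posterior mean and std at x_new; targets are centred on their mean."""
    y_mean = sum(y) / len(y)
    yc = [t - y_mean for t in y]
    K = [[rbf(a, b, **kernel) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, yc))  # alpha = K⁻¹ (y - mean)
    k_star = [rbf(a, x_new, **kernel) for a in X]
    mean = y_mean + sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = rbf(x_new, x_new, **kernel) - sum(vi * vi for vi in v)
    return mean, math.sqrt(max(var, 0.0))


X = [[0.0], [1.0], [2.0]]
y = [0.0, 1.0, 0.0]
near_mean, near_std = gp_posterior(X, y, [1.0])  # close to the observed 1.0, small std
far_mean, far_std = gp_posterior(X, y, [10.0])   # reverts to mean(y) = 1/3, std ≈ 1
```

The contrast between the two calls is the point: at a well-observed recipe the band is narrow, far from any data it widens to the prior, which is exactly the signal the optimizer uses to decide how much to trust a prediction.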

The objective function can combine multiple goals, for example maximizing OEE while keeping scrap below a threshold and keeping energy per square metre within a specified band. Hard constraints encode equipment limits and plywood/adhesive know‑how (maximum temperatures, allowed pressure ranges, safe speed envelopes), so proposed recipes are always technically feasible. An acquisition function such as Expected Improvement then selects the parameter changes that offer the best trade‑off between exploring new regions and exploiting known good ones. A typical proposal reads “increase Zone 5 temperature by 10 °C and upper transport speed by 5%,” accompanied by the predicted improvement in micro‑stops and scrap based on historical runs.
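The selection step could look roughly like this, assuming the surrogate returns a predicted OEE mean and standard deviation plus a predicted scrap rate for each candidate. `HARD_LIMITS`, the 3% scrap threshold, and `predict` are hypothetical. Filtering on predicted mean scrap is a simplification; a more careful variant weights EI by the probability that the scrap constraint is met.

```python
import math

# Hypothetical hard limits; real values come from equipment and adhesive specifications.
HARD_LIMITS = {"zone5_temp_c": (150.0, 210.0), "upper_transport_pct": (80.0, 110.0)}


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.01) -> float:
    """Expected Improvement for maximising a KPI such as OEE."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + sigma * pdf


def feasible(recipe: dict) -> bool:
    return all(lo <= recipe[k] <= hi for k, (lo, hi) in HARD_LIMITS.items())


def pick_next(candidates, predict, best_oee, max_scrap_pct=3.0):
    """Return the feasible candidate with the highest EI on OEE, or None."""
    scored = []
    for recipe in candidates:
        if not feasible(recipe):
            continue  # hard constraint: never propose an infeasible recipe
        oee_mu, oee_sigma, scrap_mu = predict(recipe)
        if scrap_mu > max_scrap_pct:
            continue  # KPI constraint on predicted scrap
        scored.append((expected_improvement(oee_mu, oee_sigma, best_oee), recipe))
    return max(scored, key=lambda t: t[0])[1] if scored else None


def toy_predict(r):  # stand-in surrogate: (OEE mean, OEE std, scrap %)
    oee = 0.80 if r["zone5_temp_c"] >= 190 else 0.75
    scrap = 4.0 if r["upper_transport_pct"] > 105 else 2.0
    return oee, 0.02, scrap


candidates = [
    {"zone5_temp_c": 220, "upper_transport_pct": 100},  # violates hard limit
    {"zone5_temp_c": 190, "upper_transport_pct": 108},  # predicted scrap too high
    {"zone5_temp_c": 190, "upper_transport_pct": 105},
    {"zone5_temp_c": 180, "upper_transport_pct": 100},
]
choice = pick_next(candidates, toy_predict, best_oee=0.76)  # -> third candidate
```

The `xi` margin nudges EI towards candidates that beat the current best by a meaningful amount rather than by noise.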

When a recommended recipe is applied on the line, the resulting run is automatically added back into the training data. The surrogate and Bayesian optimization logic are periodically retrained, so the system continuously adapts to new veneer sources, maintenance states, or process upgrades without requiring manual retuning.

Regression‑based insight and guardrails for safe operating windows

In parallel with Bayesian optimization, more interpretable regression and response‑surface models show how individual parameters and small combinations of parameters affect outcomes. Partial‑dependence plots, local sensitivity curves, and response surfaces show, for example, how joint scrap or micro‑stops change as Zone 5 temperature or transport speed is varied for a given veneer type and moisture range. These views help engineers validate model behavior against plywood‑specific studies on pressing temperature, pressure, and time, and separate plausible causal effects from mere correlations.
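Partial dependence itself is simple to compute: force one feature to each value on a grid for every historical run, and average the model's predictions. The sketch assumes any `predict(row) -> float` surrogate; the toy model and feature names in the usage lines are invented for illustration. A partial-dependence curve describes the model rather than the process, which is why the comparison with plywood pressing studies still matters.

```python
def partial_dependence(predict, rows, feature, grid):
    """Average prediction over historical rows when `feature` is set to each grid value."""
    curve = []
    for value in grid:
        preds = [predict({**row, feature: value}) for row in rows]
        curve.append((value, sum(preds) / len(preds)))
    return curve


def toy_model(r):
    # Invented surrogate: scrap rises 0.1 pp per °C and 1 pp per moisture point.
    return 0.1 * r["zone5_temp_c"] + r["moisture_pct"]


history = [{"zone5_temp_c": 185, "moisture_pct": 1.0}, {"zone5_temp_c": 192, "moisture_pct": 3.0}]
curve = partial_dependence(toy_model, history, "zone5_temp_c", [180, 190])
# curve ≈ [(180, 20.0), (190, 21.0)]
```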

Based on this understanding, engineers define guardrails: preferred “green zones” where parameters are known to be safe and effective, and wider “amber zones” where experiments are allowed under supervision. The optimization engine is restricted to these zones by default, and recipes that would push parameters outside them are either blocked or clearly flagged as requiring explicit approval. This guardrail concept ensures that AI‑driven exploration remains aligned with process safety, product standards, and equipment longevity.
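One way to encode such guardrails, assuming each guarded parameter has a green window nested inside a wider amber window; the parameter names and limits in `WINDOWS` are hypothetical. Mapping "outside amber" to blocked and "inside amber but outside green" to approval-required is one reading of the rule above. A recipe is only as safe as its worst parameter, so the function returns the most restrictive zone.

```python
from enum import Enum


class Zone(Enum):
    GREEN = "green"      # known safe and effective
    AMBER = "amber"      # experiments allowed with engineer approval
    BLOCKED = "blocked"  # outside the amber envelope: never proposed


# Hypothetical windows per parameter: (green_lo, green_hi, amber_lo, amber_hi).
WINDOWS = {
    "zone5_temp_c": (175.0, 195.0, 165.0, 205.0),
    "upper_transport_pct": (90.0, 105.0, 85.0, 110.0),
}


def classify(recipe: dict) -> Zone:
    """Return the most restrictive zone across all guarded parameters."""
    worst = Zone.GREEN
    for name, (g_lo, g_hi, a_lo, a_hi) in WINDOWS.items():
        value = recipe[name]
        if not a_lo <= value <= a_hi:
            return Zone.BLOCKED
        if not g_lo <= value <= g_hi:
            worst = Zone.AMBER
    return worst


green = classify({"zone5_temp_c": 190, "upper_transport_pct": 100})    # Zone.GREEN
amber = classify({"zone5_temp_c": 200, "upper_transport_pct": 100})    # Zone.AMBER
blocked = classify({"zone5_temp_c": 190, "upper_transport_pct": 112})  # Zone.BLOCKED
```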

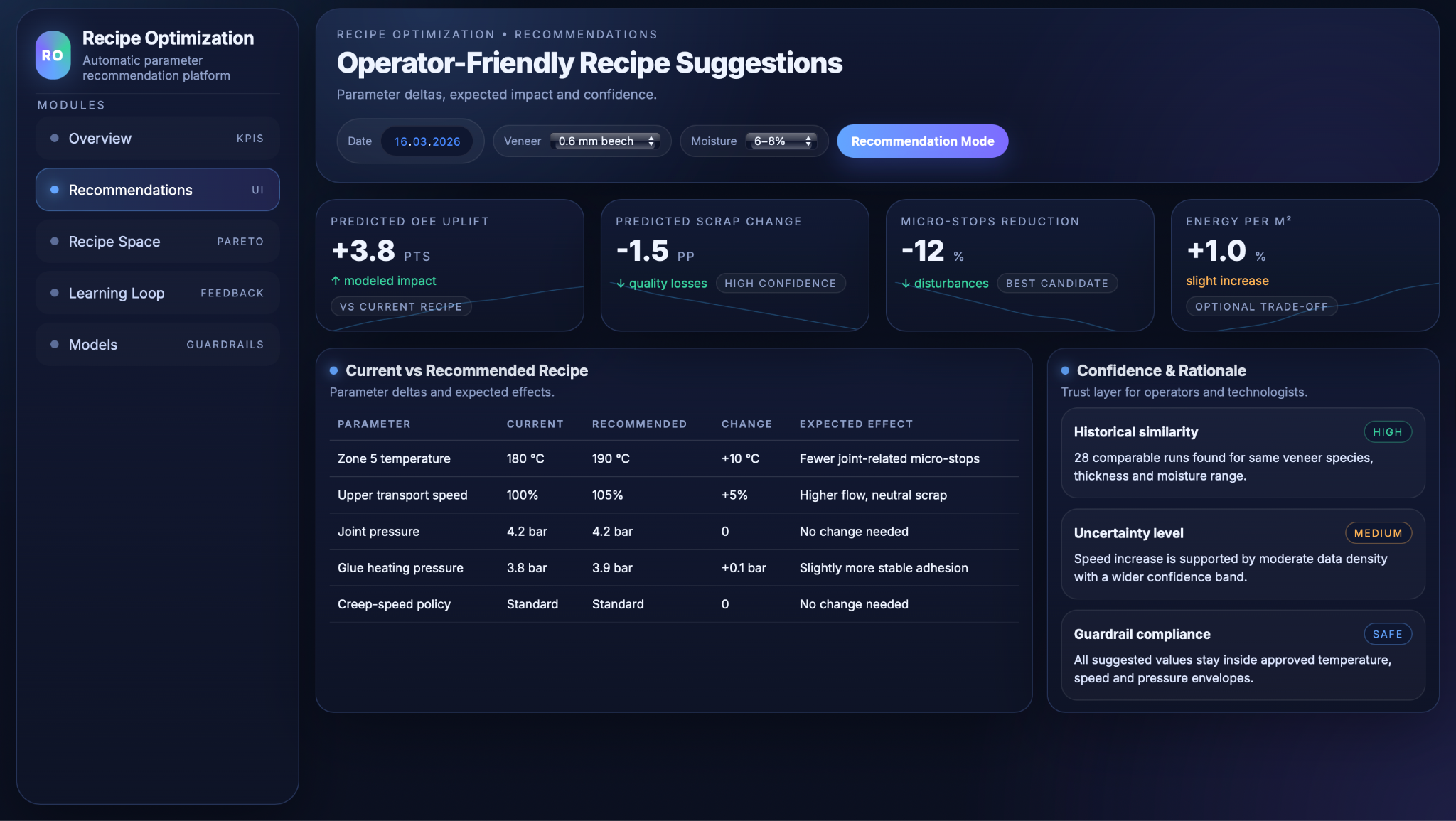

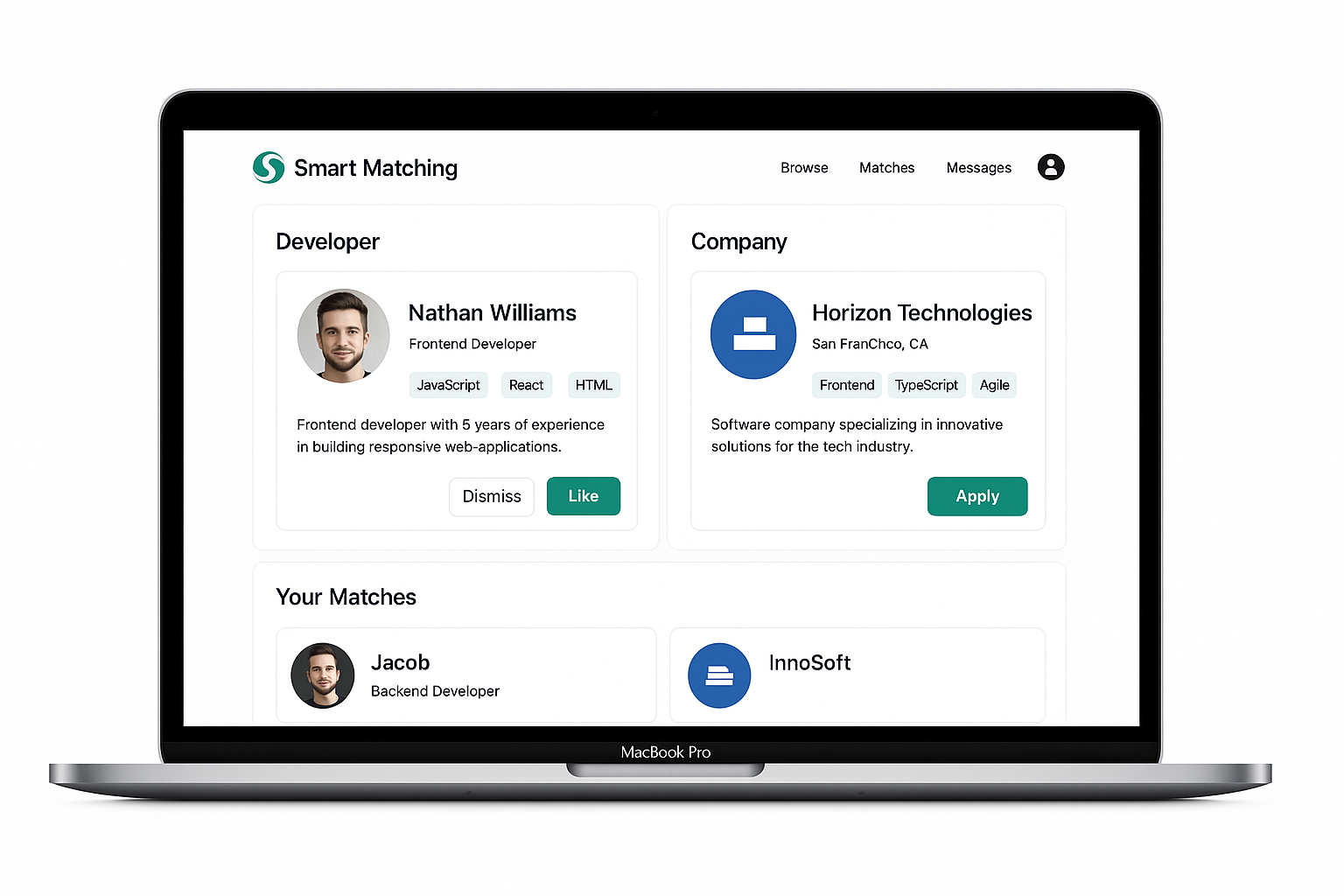

Operator and Engineer UI

The user interfaces are designed so that operators get simple guidance while engineers retain deeper control and visibility. Both views are built around the same underlying models, but surface different levels of detail and governance.

Operator recommendation view: clear deltas and expected impact

For a selected veneer specification and moisture range, the operator screen shows the current standard recipe alongside the proposed settings. Key parameters such as zone temperatures, transport speeds, and pressures are displayed with clear deltas, for example “Zone 5: 180 °C → 190 °C” or “Upper transport: 100% → 105%,” so changes are easy to understand and apply. The expected effect of the new recipe is summarised in plain language, including indicators like “micro‑stops −12%,” “scrap −1.5 pp,” and “OEE +3.8 points,” together with a confidence level derived from historical similarity and data density.
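The delta and impact strings could be produced along these lines. The parameter keys, labels, and units are hypothetical, and the Unicode minus sign (U+2212) is an assumed presentation choice to match the examples in the text.

```python
MINUS = "\u2212"  # typographic minus sign, as in "scrap −1.5 pp"


def format_delta(label: str, current: float, proposed: float, unit: str) -> str:
    """Render one setting change, e.g. 'Zone 5: 180 °C → 190 °C'."""
    return f"{label}: {current:g}{unit} → {proposed:g}{unit}"


def format_impact(name: str, delta: float, unit: str) -> str:
    """Render a signed KPI change, e.g. 'OEE +3.8 points'."""
    sign = "+" if delta > 0 else MINUS if delta < 0 else "±"
    return f"{name} {sign}{abs(delta):g}{unit}"


def recommendation_lines(current: dict, proposed: dict, labels: dict) -> list[str]:
    """List only the parameters whose proposed value differs from the standard."""
    return [
        format_delta(label, current[key], proposed[key], unit)
        for key, (label, unit) in labels.items()
        if proposed[key] != current[key]
    ]


labels = {"zone5_temp_c": ("Zone 5", " °C"), "upper_transport_pct": ("Upper transport", "%"),
          "joint_press_bar": ("Joint press", " bar")}
current = {"zone5_temp_c": 180, "upper_transport_pct": 100, "joint_press_bar": 6.0}
proposed = {"zone5_temp_c": 190, "upper_transport_pct": 105, "joint_press_bar": 6.0}
lines = recommendation_lines(current, proposed, labels)
# ['Zone 5: 180 °C → 190 °C', 'Upper transport: 100% → 105%']
impact = format_impact("scrap", -1.5, " pp")  # 'scrap −1.5 pp'
```

Hiding unchanged parameters keeps the operator's attention on the few settings that actually need to move.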

Trust cues help operators judge whether to follow a suggestion: a confidence badge (high/medium/low), a short rationale (“based on 28 similar runs”), and a guardrail status (safe / inside approved envelopes). If a recommendation approaches the limits of the defined safe zones, the UI can require engineer confirmation or flag it as an experiment, keeping day‑to‑day operation straightforward while still enabling controlled exploration.
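A simple way to derive such a badge is to count historical runs that lie close to the proposed recipe in scaled parameter space. The scales, the radius, and the 20/5 thresholds below are hypothetical, and the history is assumed to be pre-filtered to the same veneer specification; the text only states that confidence reflects historical similarity and data density.

```python
import math


def similar_run_count(proposed: dict, history: list[dict], scales: dict, radius: float = 1.0) -> int:
    """Count past runs within `radius` of the proposal after dividing each gap by its scale."""
    count = 0
    for run in history:
        dist = math.sqrt(sum(((proposed[k] - run[k]) / s) ** 2 for k, s in scales.items()))
        if dist <= radius:
            count += 1
    return count


def confidence_badge(n_similar: int, high: int = 20, medium: int = 5) -> str:
    """Map the similar-run count to the operator badge (thresholds are hypothetical)."""
    if n_similar >= high:
        return "high"
    return "medium" if n_similar >= medium else "low"


scales = {"zone5_temp_c": 5.0, "upper_transport_pct": 2.5}  # assumed "one step" per parameter
history = [  # runs of the same veneer spec (invented values)
    {"zone5_temp_c": 188, "upper_transport_pct": 104},
    {"zone5_temp_c": 190, "upper_transport_pct": 105},
    {"zone5_temp_c": 170, "upper_transport_pct": 105},
]
n = similar_run_count({"zone5_temp_c": 190, "upper_transport_pct": 105}, history, scales)
badge, rationale = confidence_badge(n), f"based on {n} similar runs"  # 'low', 'based on 2 similar runs'
```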

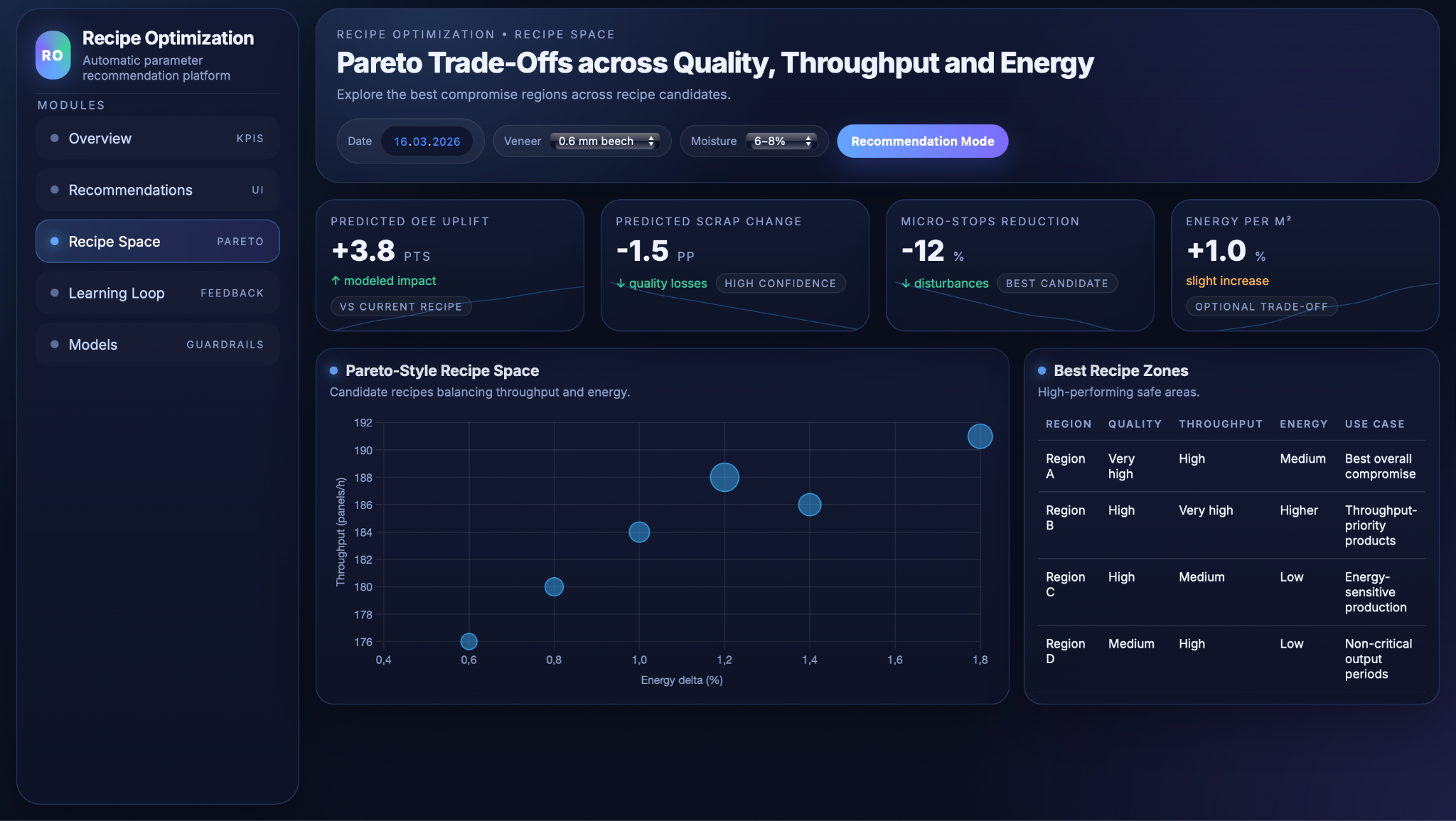

Engineering tools: parameter–performance dashboards and Pareto spaces

Engineers and technologists access more detailed dashboards that expose how parameters influence performance over many runs. Parameter–performance views show relationships such as OEE, scrap, or micro‑stops versus temperature, pressure, and speed ranges by veneer type, based on the fitted regression and Bayesian models. These views highlight “sweet spots” where the process achieves high quality and throughput at acceptable energy levels, and reveal regions where the line tends to be unstable or inefficient.

A dedicated Pareto space view visualises candidate recipes as points in a multi‑objective landscape, for example plotting throughput against energy use with colour or size indicating quality. Engineers can inspect clusters of high‑performing recipes, compare alternative trade‑off regions (e.g., “best overall compromise” vs “throughput‑priority recipes”), and decide which operating zones should become the new standard for specific products. This helps align optimisation decisions with business priorities such as cost, sustainability, or service‑level commitments.
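The points worth highlighting in such a view are the non-dominated (Pareto-optimal) recipes. A small sketch, assuming each candidate has a predicted throughput (m²/h), energy use (kWh/m²), and quality yield; the recipe names and numbers are invented. Energy is negated so that larger is better on every objective.

```python
def dominates(a: tuple, b: tuple) -> bool:
    """True if `a` is at least as good as `b` everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_recipes(candidates: dict[str, tuple[float, float, float]]) -> list[str]:
    """Names of recipes not dominated by any other; values are (throughput, energy, yield)."""
    # Orient objectives so that larger is better: negate energy use.
    scores = {name: (t, -e, q) for name, (t, e, q) in candidates.items()}
    return [
        name for name, s in scores.items()
        if not any(dominates(other, s) for other in scores.values())
    ]


candidates = {
    "R1": (120.0, 2.0, 0.97),
    "R2": (130.0, 2.4, 0.96),
    "R3": (115.0, 2.1, 0.95),  # worse than R1 on all three objectives
    "R4": (110.0, 1.8, 0.98),
}
front = pareto_recipes(candidates)  # ['R1', 'R2', 'R4']
```

R2 is the throughput-priority option, R4 the energy- and quality-priority one, and R1 a compromise; choosing among them is the business decision the view is meant to support.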

Recipe management, approvals and audit trails for CI projects

Recipe management functions allow engineers to move seamlessly from experimental settings to controlled standards. Once a suggested recipe has been tested and validated in production, it can be promoted to “standard” for a given veneer specification and product, with version tags and effective‑from dates. The system tracks deviations from standard in live production, making it visible when operators run alternative settings and how those choices affect OEE, scrap, or micro‑stops.
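Versioned standards and deviation tracking could be modelled as below. The `StandardRecipe` structure, tolerance bands, spec label, and example dates are hypothetical; the sketch only shows how an effective-from date selects the active version and how live settings are compared against it.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class StandardRecipe:
    veneer_spec: str
    version: str
    effective_from: date
    setpoints: dict = field(default_factory=dict)  # parameter -> (target, tolerance)


def active_standard(standards, veneer_spec, on):
    """Latest version for this spec whose effective-from date is not after `on`."""
    eligible = [s for s in standards if s.veneer_spec == veneer_spec and s.effective_from <= on]
    return max(eligible, key=lambda s: s.effective_from, default=None)


def deviations(standard, live):
    """Parameters whose live value leaves the tolerance band, with the signed offset."""
    return {
        name: live[name] - target
        for name, (target, tol) in standard.setpoints.items()
        if abs(live[name] - target) > tol
    }


standards = [
    StandardRecipe("birch-1.5", "v2", date(2023, 6, 1), {"zone5_temp_c": (180.0, 2.0)}),
    StandardRecipe("birch-1.5", "v3", date(2024, 1, 1), {"zone5_temp_c": (190.0, 2.0)}),
]
std = active_standard(standards, "birch-1.5", date(2024, 2, 1))  # -> v3
offsets = deviations(std, {"zone5_temp_c": 193.0})  # {'zone5_temp_c': 3.0}
```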

Approval workflows and audit trails support formal continuous‑improvement projects and quality systems. Each promoted recipe is linked to the evidence behind it: model predictions, relevant trial runs, before/after KPI shifts, and any required sign‑offs from process engineering or quality. This creates a documented chain from data‑driven recommendation to approved operating practice, reducing unstructured trial‑and‑error and making it easier to justify and revisit recipe decisions later.

Business Value and Conclusions

Higher OEE and fewer micro‑stops with data‑driven tuning

Micro‑stops are often described as “silent killers” of OEE: they can erode 10–15% of performance through frequent short interruptions that traditional reporting underestimates. By explicitly modelling micro‑stops as a function of temperatures, speeds, and pressures, the recipe optimization can detect parameter combinations that systematically trigger these small disturbances and suggest alternatives that keep the line running more smoothly. As a result, the same installed equipment can deliver more net run time and higher effective capacity without major hardware investment.

Lower scrap and faster ramp‑up for new veneer specs

Data‑driven recipe modeling has been shown to reduce scrap by linking process parameters and raw‑material characteristics to defect rates, enabling operators to intervene before defects occur rather than sorting them out afterward. In this use case, each combination of veneer species, thickness, and moisture range accumulates its own learnt “sweet spots,” so new veneer sources or products reach stable quality with fewer trial iterations. Multi‑objective and Bayesian approaches are already used in industrial process design to efficiently explore large parameter spaces with far fewer experiments than manual search, and the same principle applies here when tuning recipes for new products.

Energy and sustainability gains through multi‑objective optimisation

Including energy per square metre or carbon footprint in the optimisation objective allows the system to surface recipes that maintain target quality and throughput while using less energy, rather than maximising throughput alone. In other industrial applications, multi‑objective Bayesian optimisation has successfully identified Pareto‑optimal operating points that trade off productivity against energy use or cost, demonstrating that such methods can systematically move processes toward more sustainable regimes. Applying the same logic on the production line means that over time, “best practice” recipes will tend to be not only high‑performing but also more energy‑ and resource‑efficient, supporting both cost and sustainability goals.
