Introduction

Purpose, rationale and users

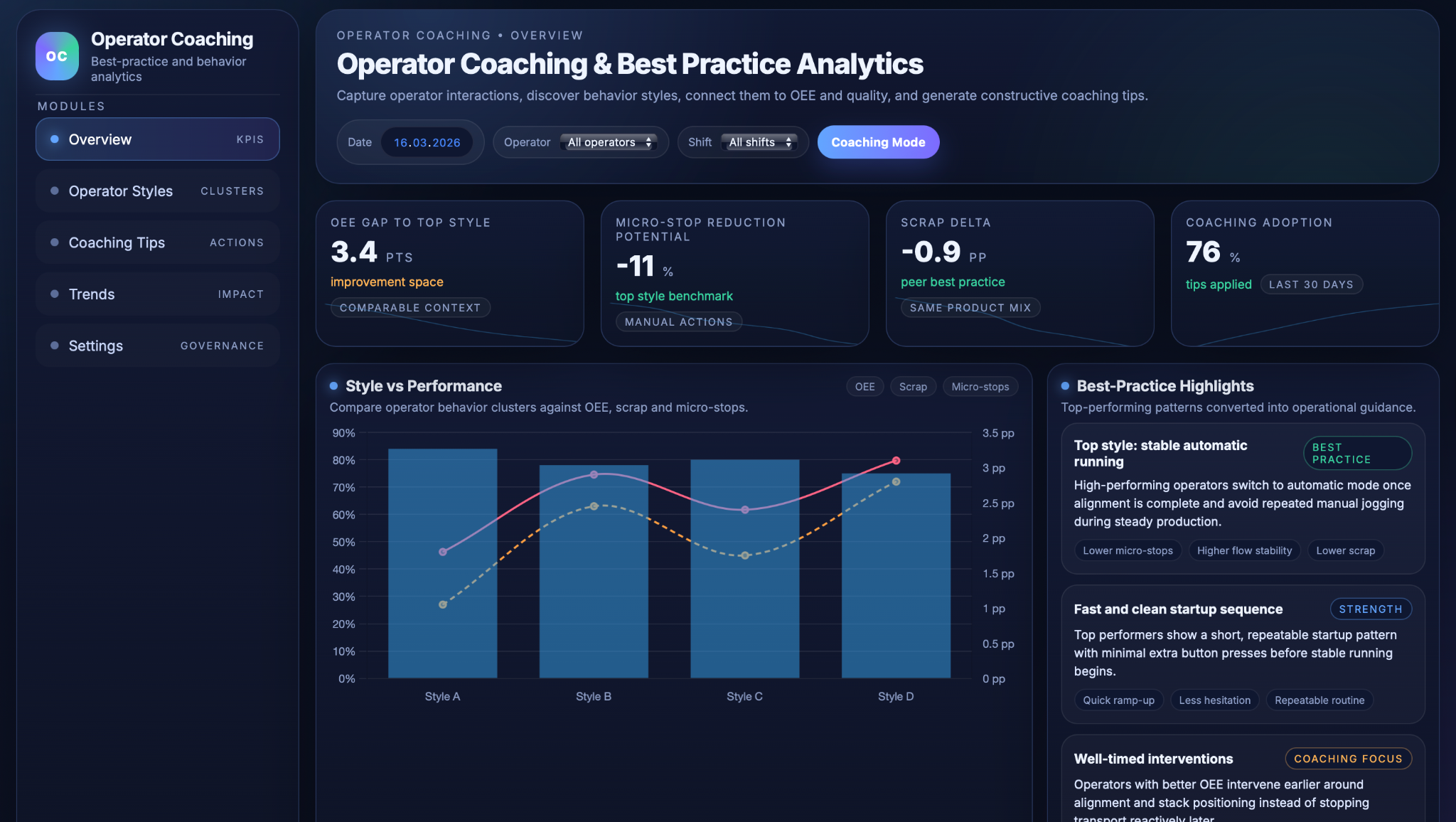

The Operator Coaching & Best Practice Analytics use case focuses on turning detailed operator interactions into actionable insights that stabilise performance across shifts. Its purpose is to standardise effective operating practices and reduce operator‑induced variability in OEE, scrap and micro‑stops by making real behaviour patterns visible instead of relying only on averages or anecdotal feedback.

In many plants, operators running the same line and product can still deliver noticeably different results, because their habits around mode changes, jogging, stops and startup sequences vary and are rarely measured systematically. This use case captures those interactions directly from HMI/PLC logs, links them to machine context and outcomes, and uses analytics to identify recurring “styles” of operating the line. The goal is not to monitor individuals for punishment, but to uncover which patterns genuinely support stable automatic running, lower micro‑stops and fewer quality issues.

The key objectives are to understand and cluster operator styles, quantify how each style correlates with OEE, scrap and micro‑stops under comparable conditions, and translate the findings into constructive, individualised coaching tips. Front‑line operators receive clear, practical suggestions grounded in their own data; supervisors and trainers gain a structured view for reviews and training plans; and production and CI leaders get a transparent link between behaviour, coaching and measurable performance changes.

Data Used

Operator actions, context and outcomes

The Operator Coaching & Best Practice Analytics use case combines detailed operator interaction logs with machine context and performance data so that each shift becomes a learnable experiment rather than just a result. Instead of relying on subjective impressions of “good” or “less good” operators, it builds a structured dataset that links “who did what, in which mode, on which product” to OEE, scrap and micro‑stops.

Operator inputs and mode selections are captured directly from HMI/PLC logs. This includes push buttons and switches such as automatic start/stop, heating on/off, head‑lift up/down, alignment functions, clamp and shear controls, transport pressure buttons, and input forward/backward or jog commands, as well as mode toggles like creep versus automatic or other line‑specific modes. Each action is time‑stamped and, where possible, linked to operator ID, terminal, machine area and active product or order, creating a granular record of how each person actually runs the line.
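As a minimal sketch of such a record, the structure below holds one time-stamped action with its optional links. The field names and the semicolon-separated log format are assumptions for illustration; real HMI/PLC exports will differ by vendor and line.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class OperatorAction:
    """One operator input taken from an HMI/PLC log (hypothetical schema)."""
    timestamp: datetime
    action: str                         # e.g. "auto_start", "transport_stop"
    operator_id: Optional[str] = None   # linked "where possible"
    terminal: Optional[str] = None
    machine_area: Optional[str] = None
    order_id: Optional[str] = None


def parse_log_line(line: str) -> OperatorAction:
    """Parse 'timestamp;action;operator;terminal;area;order' (assumed format).

    Missing or empty trailing fields become None, so an action is still
    recorded when the operator or order is unknown.
    """
    fields = [part.strip() or None for part in line.split(";")]
    fields += [None] * (6 - len(fields))
    ts, action, operator_id, terminal, area, order_id = fields[:6]
    return OperatorAction(datetime.fromisoformat(ts), action,
                          operator_id, terminal, area, order_id)


example = parse_log_line("2024-03-01T06:02:15;transport_stop;OP17;HMI-2;infeed;")
```

Keeping the optional links as `None` rather than dropping incomplete rows preserves the action counts even when attribution to a person or order is not possible.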

Machine and process context provides the backdrop for interpreting these actions. For each period and event, the system records machine status (running, minor stop, micro‑stop, alarm), line speed, current recipe or product, and relevant process events such as light‑barrier trips, automatic stops or changeovers. This makes it possible to see, for example, whether frequent jog cycles occur mainly during startup, during normal steady‑state running, or in response to specific disturbances.
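One way to attach context to actions is an "as of" lookup: each action takes the most recent machine state at or before its timestamp. The sketch below assumes state changes arrive as a sorted list of `(time, state)` pairs with times in seconds; the state names and sample values are illustrative, not taken from a real line.

```python
from bisect import bisect_right


def state_at(state_changes, t):
    """Return the machine state active at time t.

    state_changes: list of (time, state) tuples sorted by time.
    Returns None if t lies before the first recorded state change.
    """
    times = [ts for ts, _ in state_changes]
    i = bisect_right(times, t) - 1
    return state_changes[i][1] if i >= 0 else None


def count_by_state(state_changes, action_times):
    """Count how many actions fall into each machine state."""
    counts = {}
    for t in action_times:
        state = state_at(state_changes, t)
        counts[state] = counts.get(state, 0) + 1
    return counts


# Illustrative data: seconds since shift start.
state_changes = [(0, "startup"), (300, "running"),
                 (900, "micro_stop"), (930, "running")]
jog_times = [20, 45, 310, 905, 1200]
jogs_by_state = count_by_state(state_changes, jog_times)
```

With this sample data, two of the five jog commands fall into startup, two into normal running and one into a micro-stop, which is the kind of breakdown the paragraph above describes.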

Performance and quality metrics are then joined to these interaction streams. OEE components (availability, performance, quality), micro‑stop counts and durations, and scrap and rework rates are calculated at appropriate time windows and product groupings; optionally, safety events and near‑misses can be included to ensure that coaching reinforces safe practices rather than encouraging risky shortcuts. Together, this creates a rich interaction log that answers “who did what, when, in which context, and with what outcome,” forming the basis for clustering operator styles and generating personalised coaching.
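For reference, the commonly used OEE decomposition can be computed per time window as sketched below. The function name and input parameters are illustrative, and a plant's own definitions of planned time and ideal cycle time take precedence.

```python
def oee_components(planned_s, run_s, ideal_cycle_s, total_count, good_count):
    """Compute availability, performance, quality and OEE for one window.

    availability = run time / planned production time
    performance  = (ideal cycle time * total count) / run time
    quality      = good count / total count
    Returns None when the window has no planned time, run time or output.
    """
    if planned_s <= 0 or run_s <= 0 or total_count <= 0:
        return None
    availability = run_s / planned_s
    performance = (ideal_cycle_s * total_count) / run_s
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Illustrative 8-hour shift: 7 h running, 10 s ideal cycle, 2268 parts, 2200 good.
shift = oee_components(planned_s=28_800, run_s=25_200,
                       ideal_cycle_s=10, total_count=2_268, good_count=2_200)
```

Computing the three components separately, rather than only the product, makes it possible to see whether a behaviour pattern mainly costs availability (stops), performance (slow running) or quality (scrap).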

Technical Realisation

Sequence mining, style performance and coaching

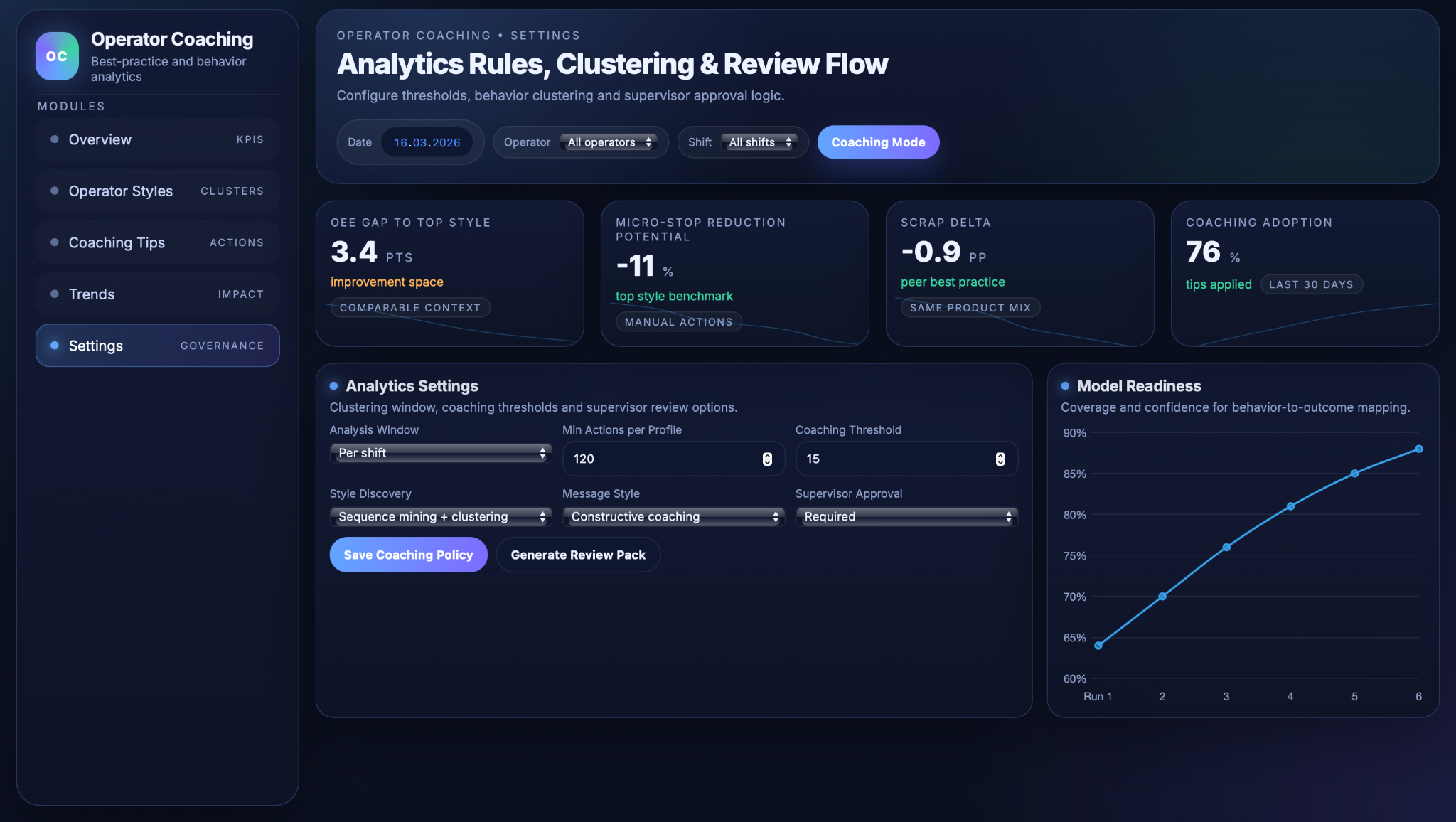

The Operator Coaching & Best Practice Analytics engine turns raw interaction logs into operator “styles,” quantifies how those styles relate to outcomes, and then translates the findings into individual coaching suggestions. It builds on the detailed action, context and performance data described above.

First, sequence mining and clustering are used to discover and characterise operator behaviour patterns. For each operator and analysis window (for example, a shift or a product run), the system constructs time‑ordered sequences of actions such as “auto start → head‑lift down → alignment → input forward → transport stop → input backward,” aligned with machine states like startup, normal run, changeover or troubleshooting. Sequential pattern‑mining methods identify frequent motifs and action orders, for instance repeated jog cycles during what should be steady automatic running. Using features such as the rate of manual stops, jogs, mode changes and the presence of specific motifs, operators are grouped into clusters that represent distinct “styles,” such as a mostly automatic, low‑intervention style versus a highly manual, frequently jogging style.
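The idea can be sketched with deliberately simple stand-ins: contiguous n-gram counting in place of a full sequential pattern-mining algorithm (which would also allow gaps between actions), and a plain k-means with caller-supplied starting centroids in place of whatever clustering method is actually used. Action names, features and sample values are assumptions for illustration.

```python
from collections import Counter


def frequent_motifs(sequences, n=2, min_support=2):
    """Return contiguous n-grams that occur in at least min_support sequences."""
    support = Counter()
    for seq in sequences:
        # A set, so each motif counts once per sequence (support, not frequency).
        support.update({tuple(seq[i:i + n]) for i in range(len(seq) - n + 1)})
    return {gram: count for gram, count in support.items() if count >= min_support}


def action_rates(seq, run_hours):
    """Feature vector: (jogs per hour, transport stops per hour)."""
    counts = Counter(seq)
    jogs = counts["input_forward"] + counts["input_backward"]
    return (jogs / run_hours, counts["transport_stop"] / run_hours)


def kmeans(points, centroids, iterations=10):
    """Plain k-means on tuples; returns (cluster index per point, centroids)."""
    assignment = []
    for _ in range(iterations):
        assignment = [
            min(range(len(centroids)),
                key=lambda k: sum((p - c) ** 2 for p, c in zip(point, centroids[k])))
            for point in points
        ]
        updated = []
        for k in range(len(centroids)):
            members = [p for p, a in zip(points, assignment) if a == k]
            if members:
                updated.append(tuple(sum(col) / len(members) for col in zip(*members)))
            else:
                updated.append(centroids[k])  # keep an empty cluster's centroid
        centroids = updated
    return assignment, centroids


sequences = [
    ["auto_start", "head_lift_down", "alignment",
     "input_forward", "input_backward", "input_forward", "input_backward"],
    ["auto_start", "alignment", "input_forward", "input_backward"],
    ["auto_start", "head_lift_down", "alignment"],
]
motifs = frequent_motifs(sequences)

# Illustrative per-operator feature vectors (jogs/h, transport stops/h).
points = [(2.0, 1.0), (3.0, 0.0), (20.0, 9.0), (25.0, 12.0)]
assignment, centroids = kmeans(points, [points[0], points[2]])
```

In this toy data the two low-intervention operators end up in one cluster and the two frequent joggers in the other. In practice the features would be scaled and restricted to comparable machine states before clustering.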

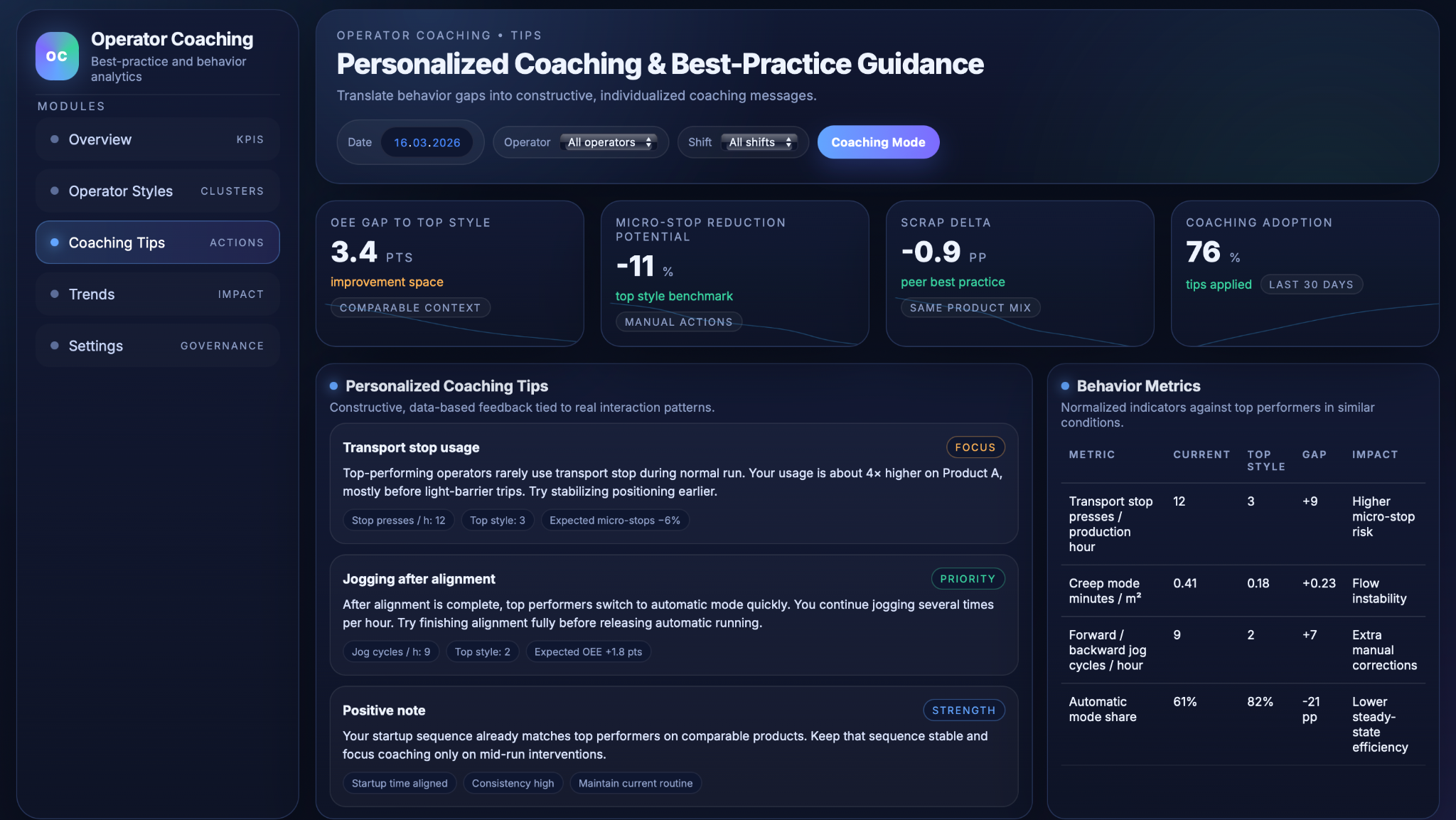

Second, these style clusters are correlated with performance and quality under comparable conditions. For each style, the system calculates average OEE, micro‑stop rate, scrap percentage, changeover times and minor‑stop structure, always comparing within similar product types, veneer specs or shifts so that behaviour is not confounded with product mix or schedule. This analysis reveals which styles tend to be associated with higher micro‑stop rates or quality losses (for example, frequent transport‑stop usage and back‑and‑forth jogging) and which tend to be associated with smoother flow and lower scrap (for example, stable automatic mode with fewer, well‑timed interventions). In addition, simple normalised indicators such as “transport‑stop presses per production hour,” “creep‑mode minutes per m²” or similar behaviour metrics are derived per operator to make the link between specific actions and OEE or micro‑stops tangible.
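A minimal sketch of the "comparable conditions" idea: outcomes are averaged per style within each product group, never across groups, and raw counts are normalised by production time. The record layout, style labels and numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def presses_per_hour(presses, production_hours):
    """Normalised indicator, e.g. transport-stop presses per production hour."""
    return presses / production_hours if production_hours > 0 else None


def style_means_within_product(runs, metric):
    """Average a metric per (product, style) so styles are compared like for like."""
    groups = defaultdict(list)
    for run in runs:
        groups[(run["product"], run["style"])].append(run[metric])
    return {key: mean(values) for key, values in groups.items()}


# Illustrative runs; "style" is a cluster label from the previous step.
runs = [
    {"product": "A", "style": "automatic", "micro_stops_per_hour": 2.0},
    {"product": "A", "style": "automatic", "micro_stops_per_hour": 4.0},
    {"product": "A", "style": "manual", "micro_stops_per_hour": 7.0},
    {"product": "B", "style": "automatic", "micro_stops_per_hour": 6.0},
    {"product": "B", "style": "manual", "micro_stops_per_hour": 10.0},
]
by_group = style_means_within_product(runs, "micro_stops_per_hour")
```

Here product B has more micro-stops than product A for both styles, so pooling all runs would mix a product effect into the style comparison. Grouping by product first keeps the two apart; it shows association, not causation.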

Third, the system generates individualised coaching tips and supports supervisor review. For each operator, a behaviour profile shows how their key metrics compare to top‑performing peers in comparable contexts, highlighting both strengths and outliers. Using templates or an LLM, these differences are turned into constructive coaching messages, for example suggesting less reliance on transport stop by improving stack positioning, or encouraging earlier switch‑over to automatic mode once alignment is complete. Messages are phrased to reinforce safe practices and to recognise existing strengths (such as good startup routines), and they explicitly avoid “optimising away” safety‑critical actions. Supervisors or trainers can review and adjust suggested tips before they are shared, ensuring that coaching remains aligned with local SOPs, culture and formal training programmes.
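The template route could look roughly like the sketch below. Metric names, the 25 % tolerance, the message wording and the set of protected metrics are all assumptions, and the sketch treats "lower is better" as true for every listed metric. Safety-critical actions are excluded from comparison altogether, so no tip can ask for fewer of them.

```python
# Hypothetical message templates keyed by behaviour metric.
TEMPLATES = {
    "transport_stops_per_hour": (
        "You use transport stop about {value:.1f} times per hour; peers on "
        "similar products average {ref:.1f}. Improving stack positioning may "
        "reduce the need for it."
    ),
    "creep_minutes_per_hour": (
        "You spend about {value:.1f} min per hour in creep mode; peers average "
        "{ref:.1f}. Consider switching to automatic once alignment is complete."
    ),
}

# Metrics that must never be "optimised away" (assumed example).
SAFETY_CRITICAL = {"emergency_stops_per_hour"}


def coaching_profile(profile, benchmark, tolerance=0.25):
    """Compare an operator's metrics with a peer benchmark.

    Returns (strengths, tips): metrics at or below the benchmark, and
    draft messages for metrics more than `tolerance` above it.
    """
    strengths, tips = [], []
    for metric, value in profile.items():
        if metric in SAFETY_CRITICAL or metric not in benchmark:
            continue
        ref = benchmark[metric]
        if value <= ref:
            strengths.append(metric)
        elif value > ref * (1 + tolerance) and metric in TEMPLATES:
            tips.append(TEMPLATES[metric].format(value=value, ref=ref))
    return strengths, tips


profile = {"transport_stops_per_hour": 9.0, "creep_minutes_per_hour": 4.0,
           "emergency_stops_per_hour": 0.5}
benchmark = {"transport_stops_per_hour": 4.0, "creep_minutes_per_hour": 5.0,
             "emergency_stops_per_hour": 0.1}
strengths, tips = coaching_profile(profile, benchmark)
```

The output is a draft only: the returned tips are meant to be reviewed and adjusted by a supervisor or trainer before anything reaches the operator.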

Dashboards and Training Integration

Operator dashboards, playbooks and reviews

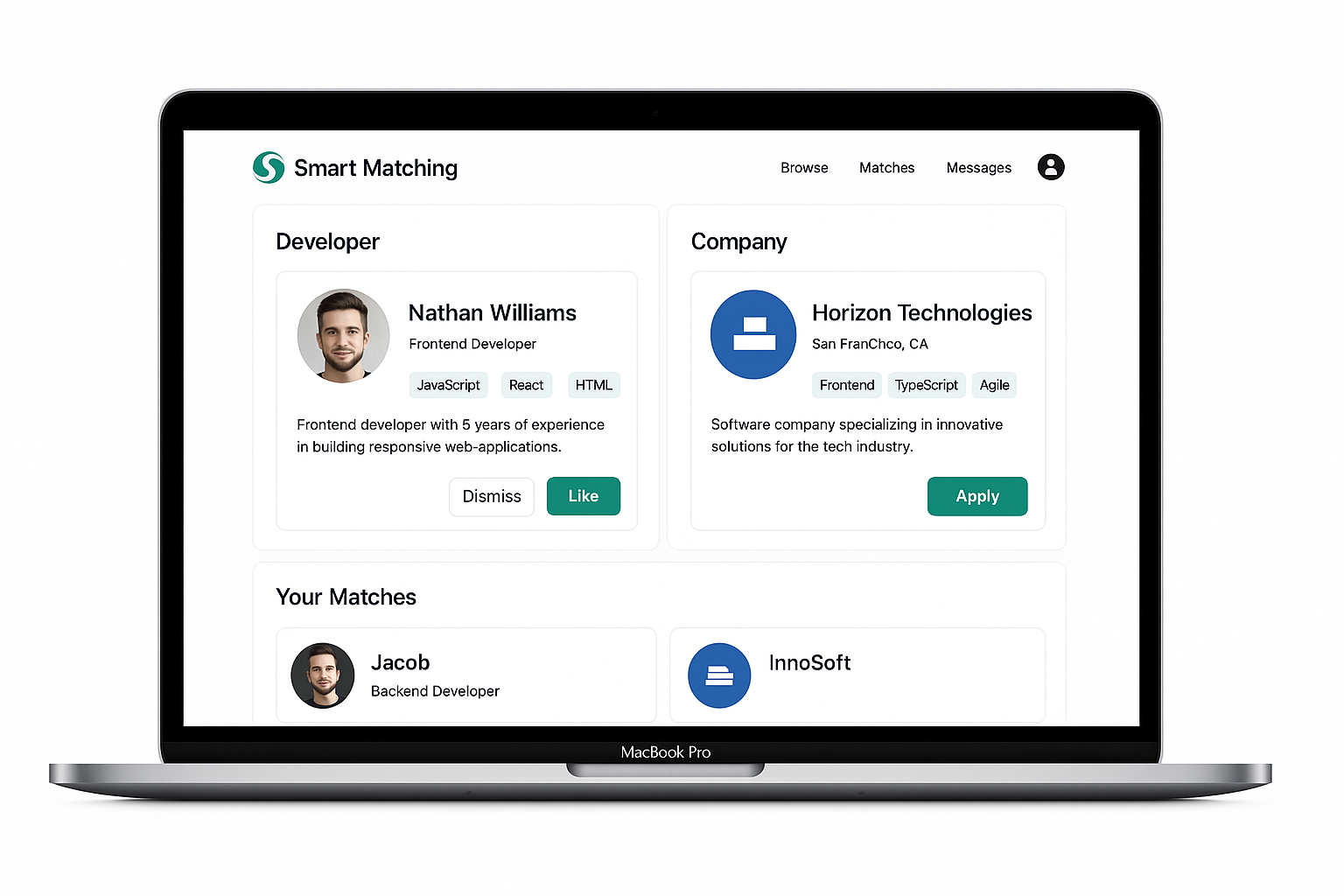

The Operator Coaching & Best Practice Analytics module exposes its insights through dashboards that connect day‑to‑day behaviour with longer‑term coaching and training. The goal is to make operator styles, coaching effects and governance rules visible in one place so that improvements can be tracked rather than assumed.

The operator performance dashboard gives per‑operator views of OEE, scrap, micro‑stops and key behaviour metrics over time. Trend lines show whether coaching is leading to more stable, high‑performing behaviour, and allow supervisors to distinguish between one‑off bad days and persistent patterns that require support. An operator‑level dashboard of this kind helps identify top performers whose practices can be shared and shows early where additional guidance is needed.
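One simple way to separate a persistent pattern from a one-off bad day is the least-squares slope of a metric over consecutive periods. The sketch below assumes equally spaced observations (for example, weekly averages); the sample values are illustrative.

```python
def trend_slope(values):
    """Least-squares slope of values against their index (0, 1, 2, ...).

    A clearly negative slope on micro-stops per hour suggests sustained
    improvement; a slope near zero with one spike suggests a one-off.
    """
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


# Weekly micro-stops per hour for two hypothetical operators.
improving = trend_slope([10, 8, 6, 4])      # steady decline after coaching
one_off = trend_slope([5, 5, 12, 5, 5])     # single bad week in the middle
```

The first series declines by two micro-stops per hour each week, while the single spike in the second series leaves the slope at roughly zero. A dashboard would normally pair the slope with the raw series so that the spike itself remains visible.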

Best‑practice extraction turns the observed behaviour of high‑performing styles into reusable guidance. Patterns from top operators are distilled into standard “playbooks” or SOP refinements, such as preferred startup sequences, typical mode‑switching logic, or effective ways to handle stack changes without excessive jogging. Training content and digital work instructions can then be updated to reflect these proven routines, so that future operators do not have to rediscover them from scratch.

The same data supports targeted onboarding and structured performance reviews. New operators can be guided towards effective styles more quickly by comparing their early behaviour profiles with those of top performers on similar products, while experienced operators receive specific, observable focus points rather than generic feedback. During periodic reviews, supervisors and operators can look at shared dashboards, discuss concrete behaviour metrics and associated impact on OEE, scrap and micro‑stops, and agree on coaching actions that will be tracked over subsequent weeks.

Business Value

Reduced operator‑induced variability

By making real operating styles visible and linking them to outcomes, the use case helps align operators around proven best practices rather than personal habits. Differences in OEE, scrap and micro‑stops between shifts shrink as more people converge on stable automatic running, well‑timed interventions and consistent startup/changeover routines.

Fewer micro‑stops and smoother flow

The analytics highlight behaviours that quietly generate micro‑stops, such as excessive transport‑stop usage, back‑and‑forth jogging or frequent mode switching during normal run. Coaching then focuses on replacing these patterns with steadier, best‑practice sequences, reducing “silent” OEE losses and making flow more predictable for planning and downstream processes.

Faster onboarding and continuous upskilling

New operators receive concrete, data‑based guidance from day one: which sequences to follow, which actions to avoid over‑using, and what “good” looks like for specific products. Experienced operators benefit from targeted coaching instead of generic training, so effort goes into behaviours with demonstrated impact, accelerating skill development across the team.

Stronger data‑driven and learning culture

Because feedback is grounded in objective interaction data and framed as supportive coaching, not surveillance, it encourages open discussion of operating practices. Over time, this normalises the idea that behaviours can be measured, compared and improved, reinforcing a culture where teams regularly review their own data, adjust routines and share successful patterns with peers.
